They will fall over with the slightest provocation. There was one memorable moment where I called into the vendor who sold us our product and they literally did not even know what I was talking about for a few minutes until it finally clicked (after I said it multiple times) that I was talking about Azure Stack HCI. When you call into either your OEM vendor or Microsoft support, they hardly know what AzStackHCI is. There's very few experienced people (consultants/VARs) with the product who can help you design, implement, and troubleshoot the operations of a cluster. But the TL DR points of interest from my time using it in 2021/2022 are: I originally wanted to make a whole 10 page essay on our terrible experience with AzStackHCI but time slipped away and now my memories are too faint for it to be of much use. It's been over a year since I last touched Azure Stack HCI (at my previous job). with n+1, if a node dies, it is a panic to get it back up, but n+2 means that it can be worked on without fear of loss of functionality with the VMWare cluster. I also did n+2 for the ESXi nodes, which gives breathing room, similar to RAID 6. Even though one can overprovision RAM, in this case, I made sure that each VM was sized for RAM and vCPUs. CPU-wise, I want with more cores rather than fewer, more powerful cores, just to ensure each VM had its own vCPUs. I learned my lesson from using SD cards, so I went with BOSS cards for ESXi. That got connected and NFS mounted, which allowed for fast image to image backups.įor the hosts, I went with run of the mill Dell servers. It doesn't have two controllers, but it is cheaper, so is ideal for disk to disk backups via Veeam. If I had to focus on tuning, I'd probably create separate shares, but for this use, having one NFS share worked well enough.įor the backup NAS, I used a Black Pearl NAS, which is a single controller, ZFS machine. 
Since none of the tasks were crazy heavy, having the backend be RAID60 + hot spares + DRAM caching was good enough. For the storage protocol, I just went with NFS. Especially when 40gigE and 100gigE are becoming more common.

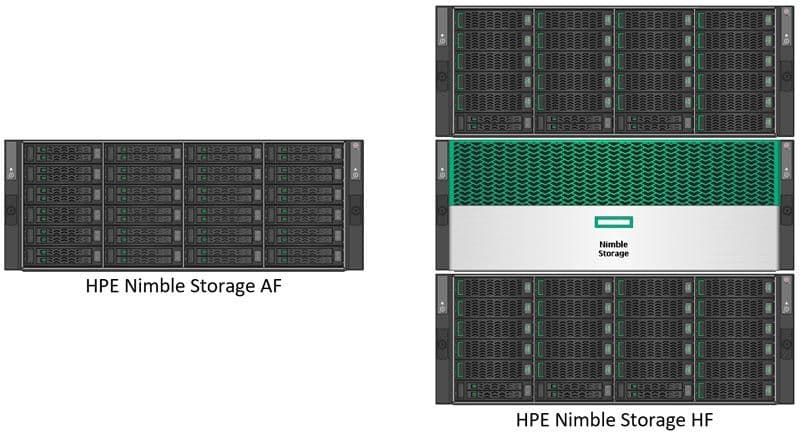

I went with Ethernet over FC because FC is becoming less used in the marketplace, so its cost over its benefits wind up being less. NetApp, JetStor, Promise, and others may not be first tier members, but they had units that did the job, had two controllers, snapshots, had disk encryption on the backend, etc.įor the storage fabric, I went with two decent Ethernet switches. Instead, I went with the usual three tier:įor the backend, I got with a VAR with a punch list, as they know more about what is up and coming than I do. I didn't go HCI because that meant a lot of work and relying on secret sauce. Recently, I had a similar cluster I needed to upgrade. It would be the minimum amount of hardware to replace and while it kicks the can down the road on our remaining hosts, we'd have over two years before they need to be replaced.Īny thoughts or other suggestions that I may be overlooking? As a result, I've been asked to look into a traditional SAN solution to replace it instead.Ĭonsidering what we have, the most cost-effective option would seem to replacing all 3 storage arrays with 2 new storage arrays, keeping the R740xd's while dropping the 3 other hosts, and adding in one new host. My original thought was to replace all of it with a new HCI cluster but there is a fear that it's "too much" for our environment of about 40 VMs. Our storage arrays are a Dell VNXe 3200 which is long out of support and a Unity 300 and HPE Nimble that are going up next year. Our environment has 5 hosts, two of which are out of support, a Dell R620 and two R630's along with two R740xd's that are still supported until late 2025.
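For anyone weighing the n+2 host sizing from the three-tier reply (no RAM or vCPU overcommit, plus two spare nodes), here is a rough Python sketch of the arithmetic. Everything in it is an assumption for illustration: the `Host` and `VM` classes, the `hosts_needed` helper, and the example VM and host sizes are made up, not taken from either poster's environment. It only compares aggregate capacity, so it ignores per-host VM placement and hypervisor overhead.

```python
from dataclasses import dataclass
from math import ceil


@dataclass(frozen=True)
class Host:
    cores: int    # physical cores available to VMs (hypothetical figure)
    ram_gib: int  # RAM available to VMs, in GiB (hypothetical figure)


@dataclass(frozen=True)
class VM:
    vcpus: int
    ram_gib: int


def hosts_needed(vms, host, spare_hosts=2):
    """Return the host count for N + spare_hosts sizing with no overcommit.

    N is the smallest number of identical hosts whose combined cores and RAM
    cover every VM at one vCPU per physical core. Only aggregate capacity is
    checked; per-host placement and hypervisor overhead are ignored.
    """
    total_vcpus = sum(vm.vcpus for vm in vms)
    total_ram = sum(vm.ram_gib for vm in vms)
    n = max(ceil(total_vcpus / host.cores), ceil(total_ram / host.ram_gib), 1)
    return n + spare_hosts


# Assumed workload: 40 VMs at 4 vCPUs / 16 GiB each, on 64-core / 512 GiB hosts.
example_vms = [VM(vcpus=4, ram_gib=16)] * 40
example_host = Host(cores=64, ram_gib=512)

n_plus_1 = hosts_needed(example_vms, example_host, spare_hosts=1)
n_plus_2 = hosts_needed(example_vms, example_host, spare_hosts=2)
print(f"n+1: {n_plus_1} hosts, n+2: {n_plus_2} hosts")
```

With these made-up numbers the CPU side is the constraint (160 vCPUs across 64-core hosts), so the difference between n+1 and n+2 is one extra host. Plug in your own VM inventory and host specs before drawing any conclusions.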
